We’ll build a SageMaker endpoint using an ml.c5.2xlarge instance on AWS. This instance has 8 vCPUs and 16 GB of RAM, and costs $0.408 per hour as of June 2022. AWS launched Amazon SageMaker in 2017 to help developers build machine learning models without prior ML expertise.

SageMaker Estimator

If no output location is specified, training results are stored in a default bucket. If the bucket with the specific name does not exist, the estimator creates the bucket during the fit() method execution. In a plain Python ML environment, we can use pickle to export the data and reload it back into a model, as in 3.4.

2-2. What is the equivalent in SageMaker, given that AWS::SageMaker::Model seems to have no argument that refers to data in an S3 bucket? Can a SageMaker Estimator be re-created using the model data in an S3 bucket?

3-1. I thought there would be a resource to define a SageMaker Estimator in CloudFormation, but it looks like there is none. Please help me understand if there is a reason.
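The pickle round trip mentioned above can be sketched as follows. This is a minimal illustration, not SageMaker code: `trained_params` and `predict` are hypothetical stand-ins for whatever a real training step produces, and the file is written to a temporary directory rather than S3.

```python
import os
import pickle
import tempfile


def predict(params, features):
    """Toy linear classifier: returns 1 if the weighted sum is non-negative."""
    score = sum(w * x for w, x in zip(params["weights"], features)) + params["bias"]
    return 1 if score >= 0 else 0


# Hypothetical "trained" parameters standing in for real model data.
trained_params = {"weights": [0.5, -0.25], "bias": 0.1}

with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, "model.pkl")

    # Export the model data to a file.
    with open(path, "wb") as f:
        pickle.dump(trained_params, f)

    # Reload the data so it can be used for prediction again.
    with open(path, "rb") as f:
        restored_params = pickle.load(f)

print(predict(restored_params, [1.0, 2.0]))
```

Note that pickle should only be used to load files from a trusted source, since unpickling can execute arbitrary code.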

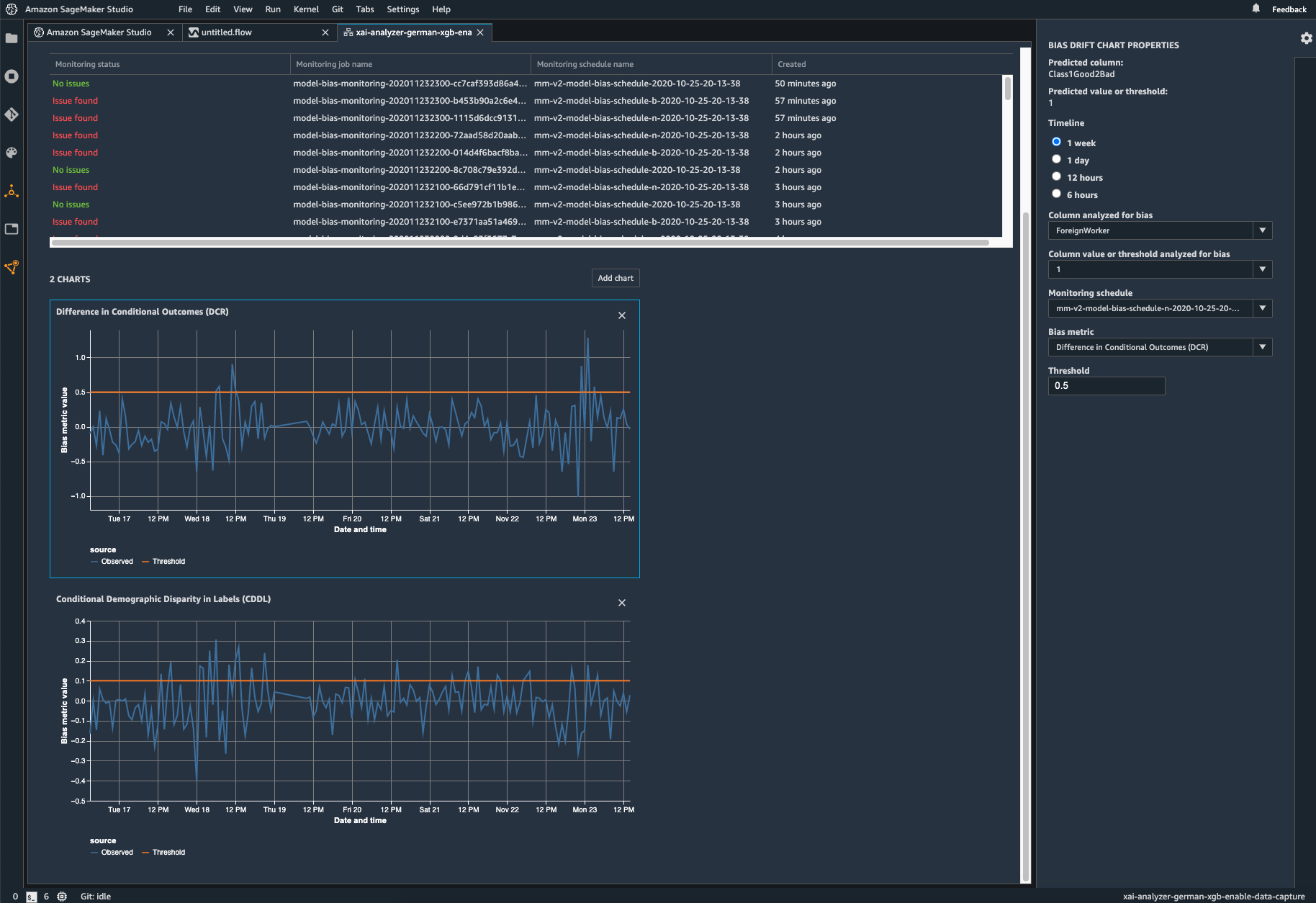

At best, SageMaker's claim that Clarify detects bias across the entire ML workflow reflects the gap between marketing and actual value creation. Example notebook: "Monitoring bias drift and feature attribution drift Amazon SageMaker Clarify". Namespace: aws/sagemaker/Endpoints/explainability-metrics.

SageMaker Endpoint or SageMaker Estimator from model data in S3

AWS::SageMaker::Model
Type: AWS::SageMaker::Model

AWS::SageMaker::Model seems to have no reference to the model data in an S3 bucket.

1-2. Hence I wonder why it is called AWS::SageMaker::Model rather than something like AWS::SageMaker::InferenceImage.

1-3. Is it a Docker image (the algorithm) that does the prediction/inference, rather than the data the algorithm runs on? Does AWS call the runtime (Docker runtime + Docker image for inference) the Model?

The SageMaker Estimator has an argument output_path, as in the Python SDK Estimators: the S3 location for saving the training result (model artifacts and output files).
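To make the output_path behaviour concrete, here is a small sketch of where the training result typically ends up. It assumes the usual SageMaker conventions (a default bucket named `sagemaker-<region>-<account-id>`, and artifacts written to `<output_path>/<job-name>/output/model.tar.gz`); the helper names, account ID, bucket, and job name are hypothetical and only build strings, they do not call AWS.

```python
def default_bucket_name(region, account_id):
    """Name of the default bucket, assuming the sagemaker-<region>-<account-id> convention."""
    return f"sagemaker-{region}-{account_id}"


def model_artifact_uri(output_path, job_name):
    """S3 URI of the model archive, assuming <output_path>/<job-name>/output/model.tar.gz."""
    return f"{output_path.rstrip('/')}/{job_name}/output/model.tar.gz"


# Hypothetical values for illustration only.
bucket = default_bucket_name("us-east-1", "123456789012")
uri = model_artifact_uri(f"s3://{bucket}/models/", "my-training-job")
print(uri)
```

A URI of this shape is what an inference-side definition would need to point at in order to reuse the trained artifacts.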


I would like to clarify the entities in AWS::SageMaker. According to the diagram in Deploy a Model on Amazon SageMaker Hosting Services, the model artifacts in SageMaker are the data generated by an ML algorithm Docker container during the model training phase and stored in an S3 bucket. However, AWS::SageMaker::Model seems to capture a Docker image that runs the inference code on a SageMaker endpoint instance.
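One detail that may help with the question: the container definition of AWS::SageMaker::Model (the PrimaryContainer property) accepts a ModelDataUrl alongside Image, so the resource can pair the inference image with the artifacts in S3. The sketch below builds such a resource as a Python dictionary and prints it as a JSON template. The image URI, S3 URI, role ARN, logical ID `InferenceModel`, and the helper name are all hypothetical placeholders.

```python
import json

# Hypothetical placeholder values; substitute your own.
IMAGE_URI = "123456789012.dkr.ecr.us-east-1.amazonaws.com/my-inference-image:latest"
MODEL_DATA_URL = "s3://my-bucket/my-training-job/output/model.tar.gz"
ROLE_ARN = "arn:aws:iam::123456789012:role/MySageMakerRole"


def sagemaker_model_resource(image_uri, model_data_url, role_arn):
    """Build an AWS::SageMaker::Model resource: inference image plus model artifacts."""
    return {
        "Type": "AWS::SageMaker::Model",
        "Properties": {
            "ExecutionRoleArn": role_arn,
            "PrimaryContainer": {
                "Image": image_uri,               # Docker image with the inference code
                "ModelDataUrl": model_data_url,   # model artifacts produced by training
            },
        },
    }


template = {
    "Resources": {
        "InferenceModel": sagemaker_model_resource(IMAGE_URI, MODEL_DATA_URL, ROLE_ARN)
    }
}
print(json.dumps(template, indent=2))
```

Read this way, the "Model" is the combination of the inference image and the trained artifacts, not the image alone.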